In the fast-moving world of Kubernetes, automated storage scaling stands out as a critical challenge. Members of the Data on Kubernetes Community have proposed a solution to address this issue for Kubernetes operators.

If, like me, this is your first time hearing about automated storage scaling, this will help you understand it better:

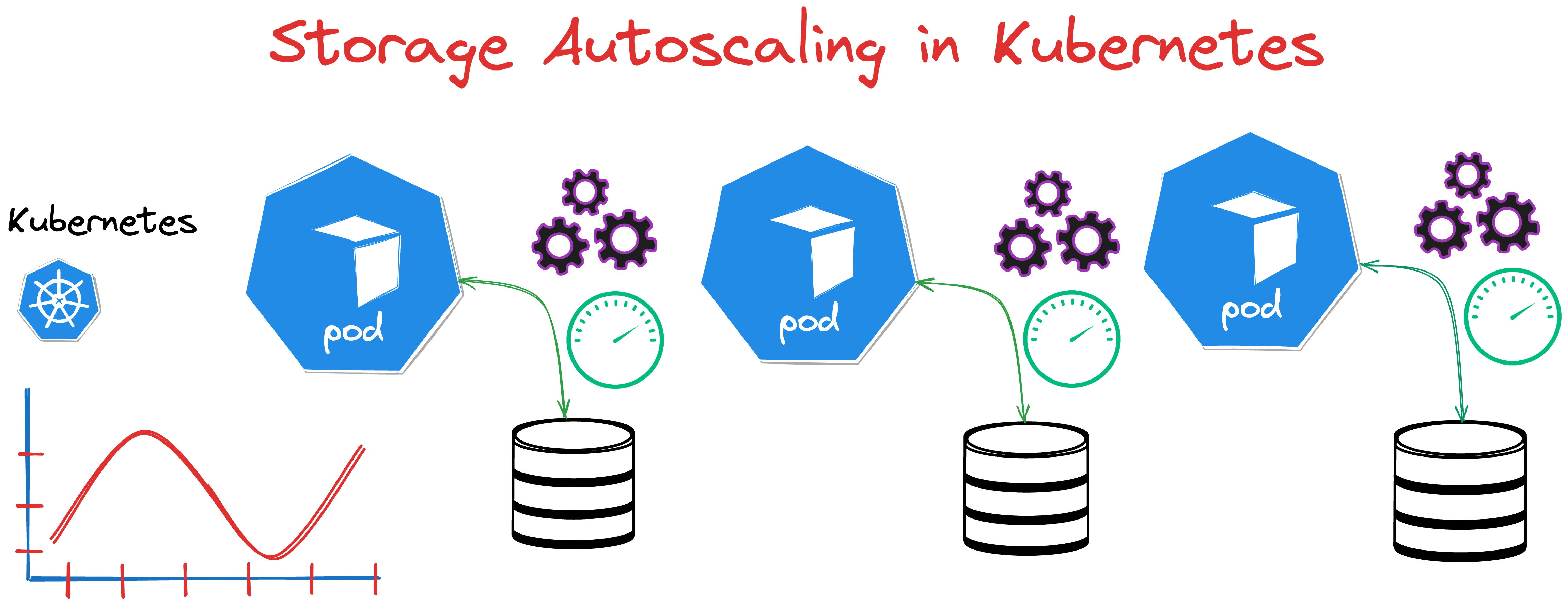

Storage scaling in Kubernetes Operators refers to the ability of an application running on Kubernetes to adjust its storage capacity automatically based on demand. In other words, it is about ensuring that an application has the right amount of storage available at any given time, optimizing for performance, cost, and operational efficiency, and doing this as automatically as possible.

As databases become increasingly integral to Kubernetes workloads, the absence of a unified solution for storage scaling is becoming more evident. Let’s explore some existing solutions and their limitations:

pvc-autoresizer

This project detects and scales PersistentVolumeClaims (PVCs) when the amount of free storage falls below a threshold. pvc-autoresizer is an active open source project on GitHub.

It has some downsides:

- It works with PVCs only. It does not work with StatefulSets and has no integration with Kubernetes Operators.

- It requires the Prometheus stack to be deployed.

Percona wrote a blog post about using pvc-autoresizer to automate storage scaling for MongoDB clusters on Kubernetes.
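To make the threshold idea concrete, here is a minimal Python sketch of the kind of decision a tool like this makes: resize only when free space drops below a threshold, grow by a fixed percentage, and never exceed a limit. It is not pvc-autoresizer's actual code. The names `VolumeStats` and `next_size` and the default values are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional

GIB = 1024 ** 3


@dataclass
class VolumeStats:
    capacity_bytes: int   # current size of the volume
    available_bytes: int  # free space reported by the metrics source


def ceil_div(a: int, b: int) -> int:
    """Integer division that rounds up."""
    return -(-a // b)


def next_size(
    stats: VolumeStats,
    threshold_pct: int = 10,      # assumed default: resize below 10% free
    increase_pct: int = 10,       # assumed default: grow by 10%
    limit_bytes: int = 100 * GIB, # assumed default: never exceed 100 GiB
) -> Optional[int]:
    """Return the new requested size in bytes, or None if no resize is needed."""
    # Enough free space left: nothing to do.
    if stats.available_bytes * 100 >= stats.capacity_bytes * threshold_pct:
        return None
    # Already at (or above) the limit: cannot grow further.
    if stats.capacity_bytes >= limit_bytes:
        return None
    grown = stats.capacity_bytes + ceil_div(stats.capacity_bytes * increase_pct, 100)
    # Round up to a whole GiB, then cap at the limit.
    grown = ceil_div(grown, GIB) * GIB
    return min(grown, limit_bytes)
```

For example, a 10 GiB volume with 0.5 GiB free would be grown to 11 GiB under these assumed defaults, while the same volume with 2 GiB free would be left alone.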

EBS params controller

This controller lets you control the IOPS and throughput parameters of EBS volumes provisioned by the EBS CSI Driver through annotations on the corresponding PersistentVolumeClaim objects in Kubernetes. It also sets annotations on PVCs backed by the EBS CSI Driver that report the current parameters, the last modification status, and timestamps.

Find more about EBS params controller on GitHub.

Kubernetes Volume Autoscaler

This tool automatically increases the size of a Persistent Volume Claim (PVC) in Kubernetes when it is nearly full (on either space or inode usage). It is a similar solution to pvc-autoresizer. Learn more about Kubernetes Volume Autoscaler.

Kubernetes Event-driven Autoscaling (KEDA)

KEDA performs horizontal scaling for various resources in Kubernetes, including custom resources. Its metric-tracking component is already in place, but unfortunately it does not support vertical scaling or storage scaling yet. We opened an issue on GitHub to start the discussion.

As you can see, the existing options for automated storage scaling all have limitations. To address this gap, the Data on Kubernetes community wants to develop a solution that solves practical problems and contributes to the open source community.

We’re tackling the significant challenge of unexpected disk usage alerts and potential system shutdowns due to insufficient volume space, a common issue in Kubernetes-based databases.

Possible Solutions

The following possible solutions were proposed:

- Operators must be capable of changing the storage size when the Custom Resource (CR) is changed.

- Operators must create resources following certain standards, such as applying annotations that indicate which fields should be changed.

- A third-party component (Scaler) will monitor storage consumption and change the relevant field in the database's CR.
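The three points above can be sketched roughly as follows. This is only an illustration of the proposed flow, not an agreed design. The annotation key `scaler.example.com/storage-size-path`, the function names, and the thresholds are all hypothetical, and the sketch works on plain dictionaries instead of calling the Kubernetes API. It also only understands sizes written with a `Gi` suffix.

```python
import copy

# Hypothetical annotation key: the operator would use it to tell the Scaler
# which CR field holds the storage size. Not defined by any existing project.
SIZE_PATH_ANNOTATION = "scaler.example.com/storage-size-path"
GIB = 1024 ** 3


def get_by_path(obj: dict, path: str):
    """Read a nested value using a dotted path such as 'spec.storage.size'."""
    for key in path.split("."):
        obj = obj[key]
    return obj


def set_by_path(obj: dict, path: str, value) -> None:
    """Write a nested value using a dotted path."""
    *parents, leaf = path.split(".")
    for key in parents:
        obj = obj[key]
    obj[leaf] = value


def parse_gi(quantity: str) -> int:
    """Simplification: accept only whole-number 'Gi' quantities like '10Gi'."""
    if not quantity.endswith("Gi"):
        raise ValueError(f"unsupported quantity: {quantity!r}")
    return int(quantity[:-2])


def scale_custom_resource(cr: dict, used_bytes: int,
                          threshold_pct: int = 80, step_pct: int = 20) -> dict:
    """Return an updated copy of the CR if usage crossed the threshold,
    otherwise return the CR unchanged."""
    annotations = cr.get("metadata", {}).get("annotations", {})
    path = annotations.get(SIZE_PATH_ANNOTATION)
    if path is None:
        return cr  # the operator did not opt in
    size_gi = parse_gi(get_by_path(cr, path))
    if used_bytes * 100 < size_gi * GIB * threshold_pct:
        return cr  # still below the usage threshold
    # Grow by step_pct, rounded up, and by at least 1 GiB.
    step_gi = max(1, -(-size_gi * step_pct // 100))
    updated = copy.deepcopy(cr)
    set_by_path(updated, path, f"{size_gi + step_gi}Gi")
    return updated
```

In a real Scaler, the updated CR would be sent to the Kubernetes API, and the operator would then carry out the actual volume resize in response to the changed field.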

Our goal as a community is to develop a fully automated solution to prevent these inconveniences and failures.

Final Thoughts

Once a new solution is validated and proven functional, it will benefit many communities, enabling them to integrate it with their operators. Additionally, it will present an excellent opportunity for Percona to incorporate it into our Operators, enhancing efficiency and facilitating automated storage scaling.

We invite anyone interested in this project to join us. This is an opportunity to be at the forefront of shaping automated scaling solutions in Kubernetes. You can join the Data on Kubernetes community on Slack, specifically in the #SIG-Operator channel.

Are you interested in understanding Storage Autoscaling in databases? Explore our detailed example of Storage Autoscaling using the Percona Operator for MongoDB. For questions or discussions, feel free to join our experts on the Percona Community Forum.
